Extension of an existing app to assist in the intervention of dementia using AI

User Research, UX/UI Design, Prototyping, AI Prompting

October 2025

Adrienne Luong, Anton Kouznetsov, Jasmine Dacanay, Long Le

Dorsa, Faith, Justine, Minju

25 hours (75 total team hours)

Focusing on assisting with recall, the existing Mnemo app extends to smart glasses and smart watch interfaces. Our team aimed to identify opportunities to build on the smart glasses component that was handed off to us while also experimenting with AI prompting to test its effectiveness in the design process.

Based on our research, we highlighted a specific target audience and aspect of dementia that we could lean into.

“Physical inactivity is highly associated with the increased risk of developing dementia”

“Repetitive aerobic exercises three to five times a week was found to have a positive effect”

"A 6-month exercise program can promote positive proxy-rated outcomes on functional capacity and BPSD in institutionalized older adults with mild to moderate dementia"

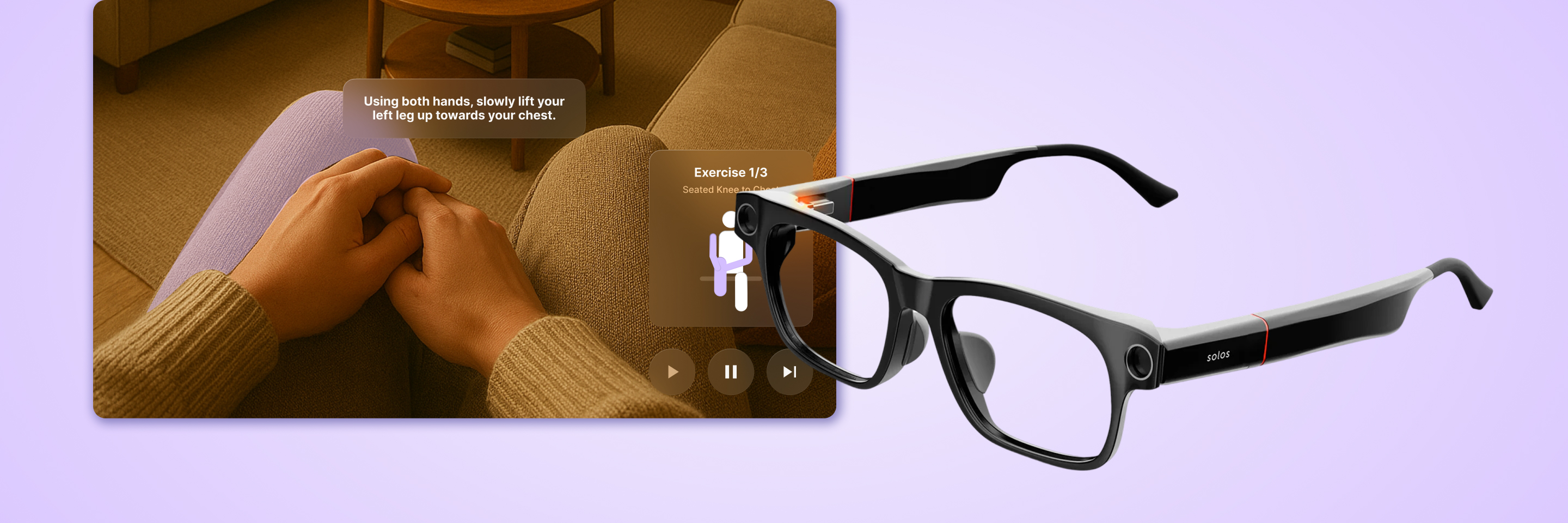

The smart glasses enable users to talk directly to the AI and navigate the interface through the paired wristband.

Real-time feedback and indicators like the body pictogram and highlighted body part ensure users are performing their physical exercises effectively.

Speaking aloud to the glasses or making changes on the wristband lets users express their thoughts and emotions, allowing the AI to adapt to their needs. Users can stop exercises, adjust them, and start breathing exercises to regulate their wellbeing.

Addressing the need for technology that isn't too complex or overwhelming, the interface is simple and straightforward. It relies on existing mental models and pattern recognition through familiar icons and consistent button placement.

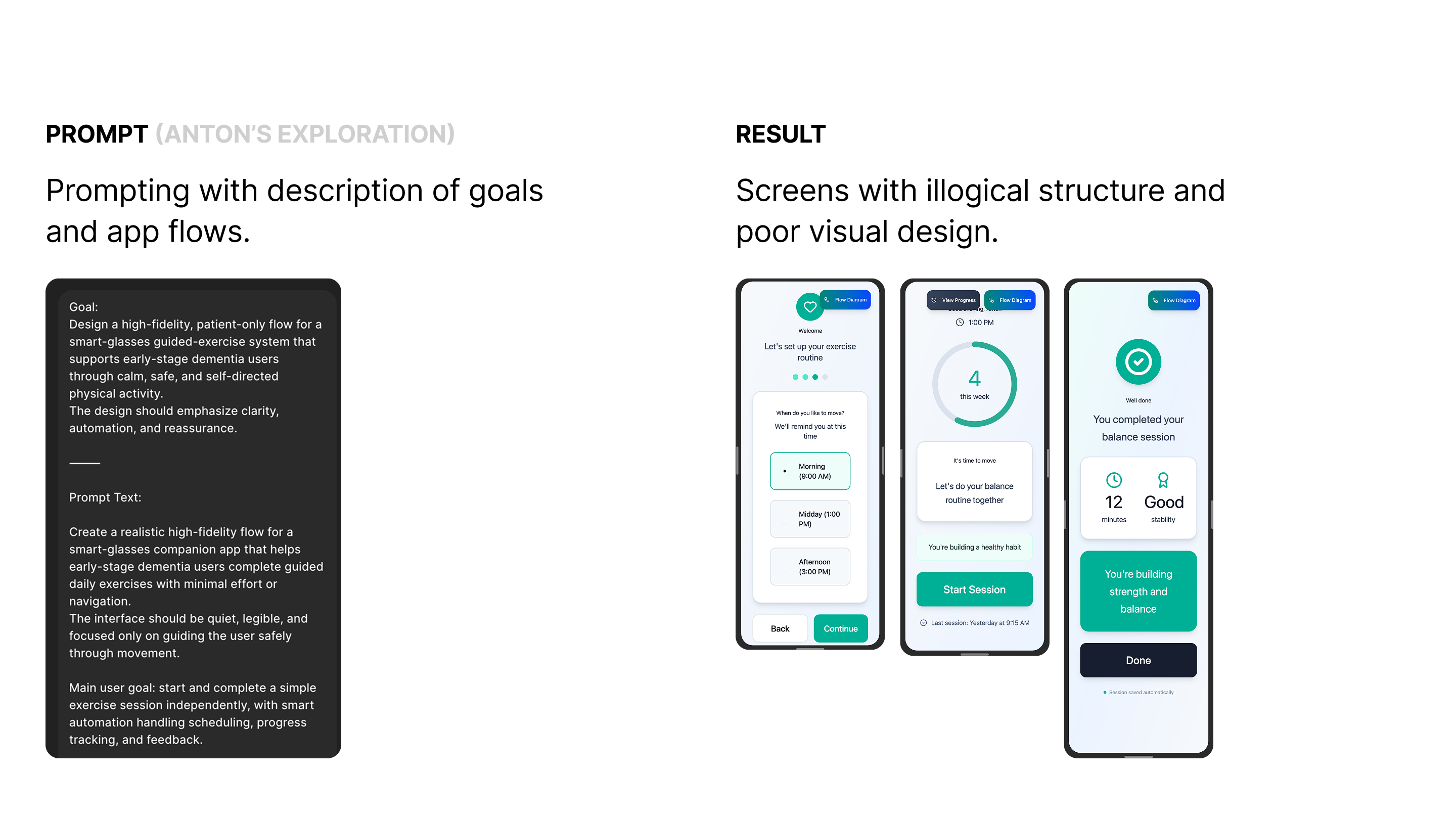

Using Figma Make, I explored how best to prompt it to generate ideas for the smart glasses interface. While ideating for the guided exercise flow, I went through iterations of different screen designs, which showcased the abilities and limitations of the AI.

When we first stepped into Figma Make, my team and I took different approaches to prompting the AI, which produced widely varying results.

Longer and more detailed prompts for specific features did not produce good results.

Figma Make produced better results when given an objective or feeling rather than specific technical logic or complex directions.

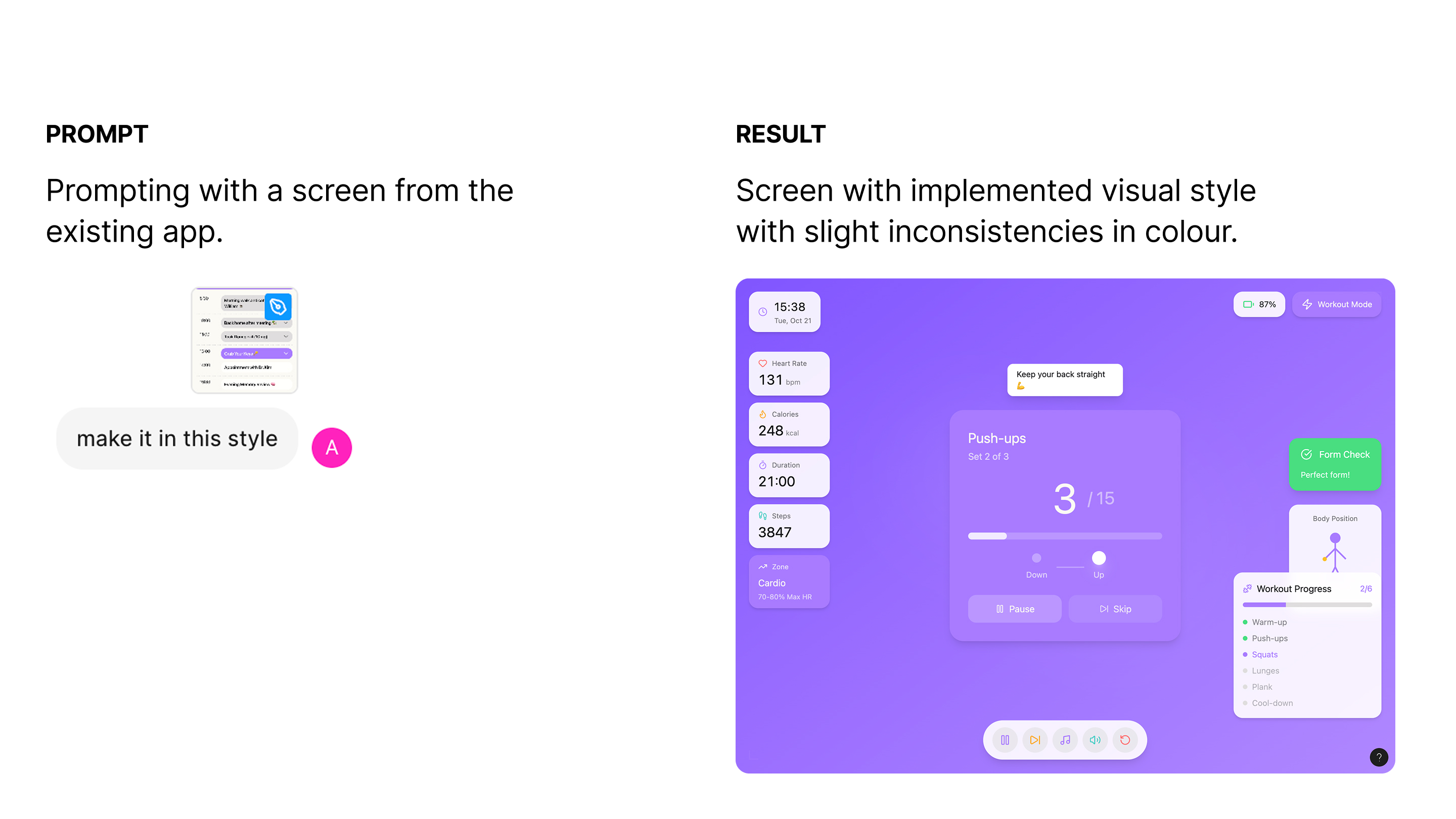

We also wanted to test out Figma Make's visual capabilities through features like uploading reference screens or components.

Adding a reference screen when prompting brought the AI's output closer to the intended vision, but the results still had UI errors.

Once prompted, it tries to stick to the same general design unless prompted otherwise.

I also tested whether the screen could be made responsive. Taking an existing horizontal screen, I applied the mobile view function.

You need to specify what screen type you are designing for within the initial prompt.

The fewer changes requested in a single prompt, the higher the chance of success.

After generating some screens, I looked through the designs to highlight features that had potential. These ultimately informed the design of the guided exercise flow.

Feedback from users revealed the need for less text and for leaning into seniors' tendency to rely on patterns when using technology.

Through experimentation, it is evident that Figma Make still has a long way to go before it can produce viable end screens. However, prompting it sparked interesting ideas that I believe can help designers with the ideation process.

Going forward, I would like to continue testing which prompts work well with this AI tool, especially as it continues to develop.